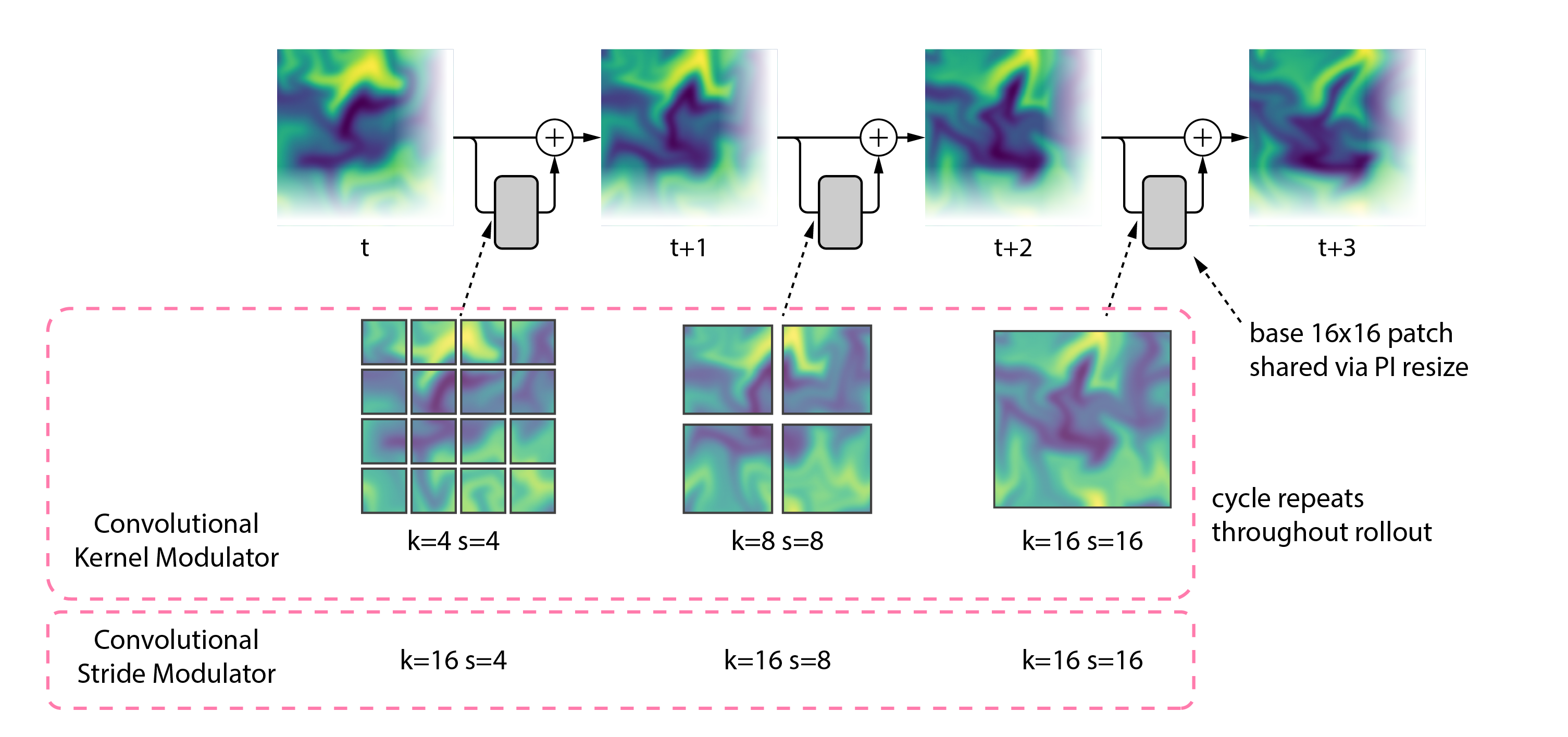

A single PDE transformer can adapt its inference compute budget on demand while producing cleaner long rollouts.

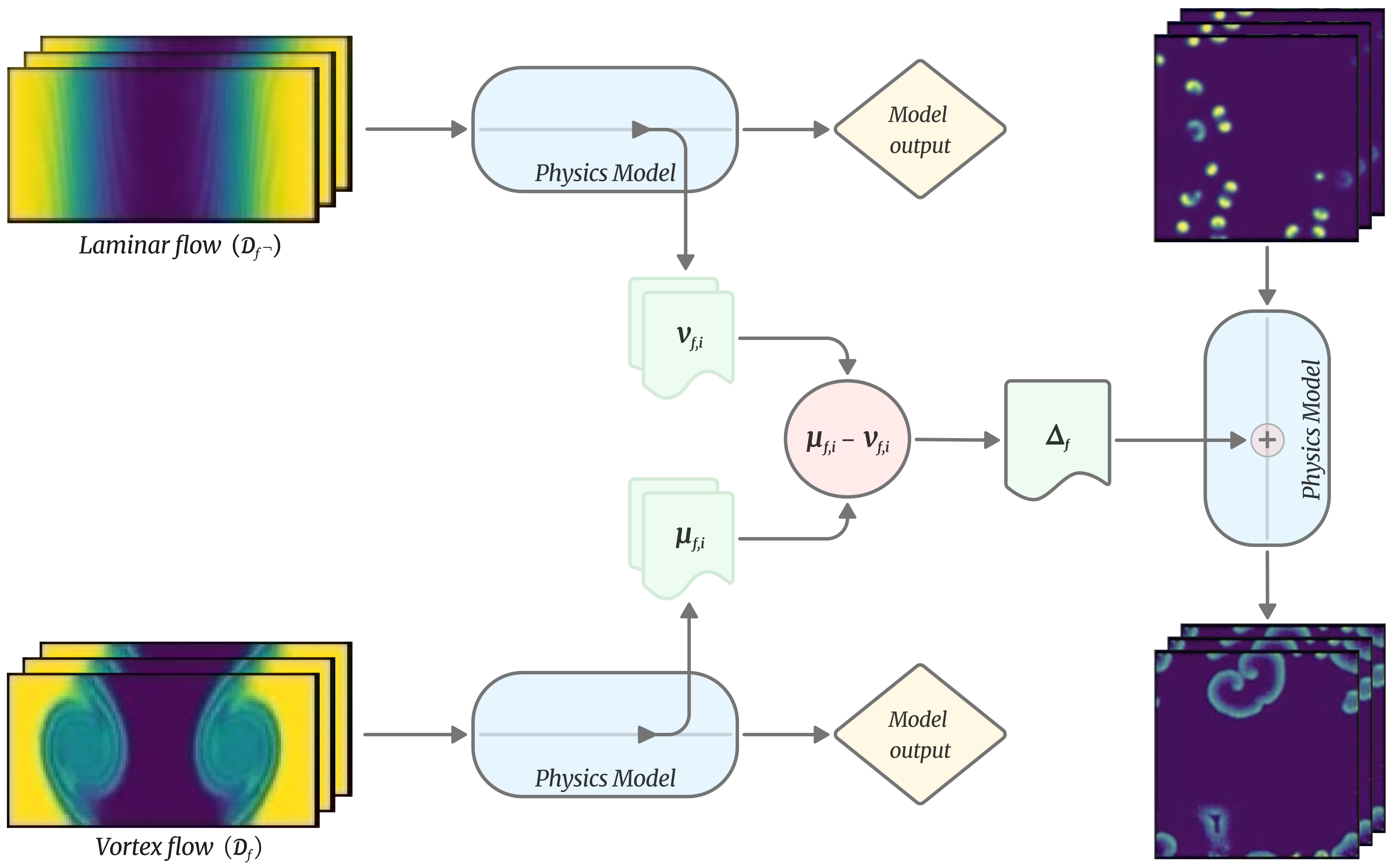

Our goal is to usher in a new class of machine learning for scientific data by building models that can leverage shared concepts across disciplines. We aim to develop, train, and release such foundation models for use by researchers worldwide.