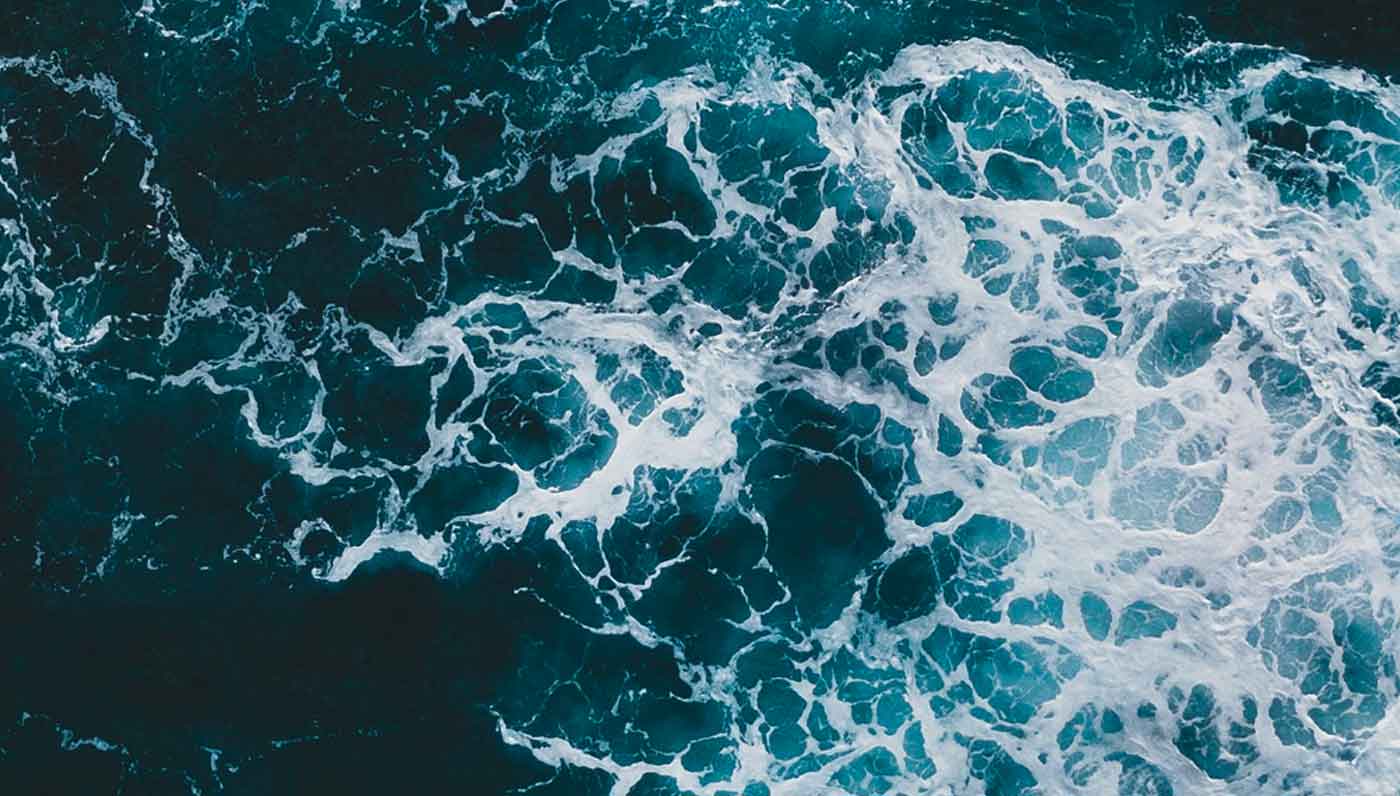

Can a physics foundation model fine-tuned only on idealized simulations transfer to real laboratory experiments it was never trained on? We test this on the Rayleigh-Taylor instability, where simulation and experiment have disagreed on the mixing growth rate for decades. We show that Walrus crosses the divide zero-shot, entering the experimentally observed growth band and shedding independent light on a longstanding debate.